Papa Carder


Imagine: artificial intelligence, this technological genius, stands at the crossroads of good and evil. On one hand, it is a shield for banks, detecting suspicious transactions in seconds. On the other, it is a weapon in the hands of carders, generating fake data and automating attacks. In 2026, the role of AI in carding (credit card fraud) has become even more significant: losses from fraud exceed billions of dollars, and technology is evolving faster than laws. Let's take a closer look at this exciting duel of wits and algorithms. We draw on the latest data and trends to show how AI is changing the rules of the game.

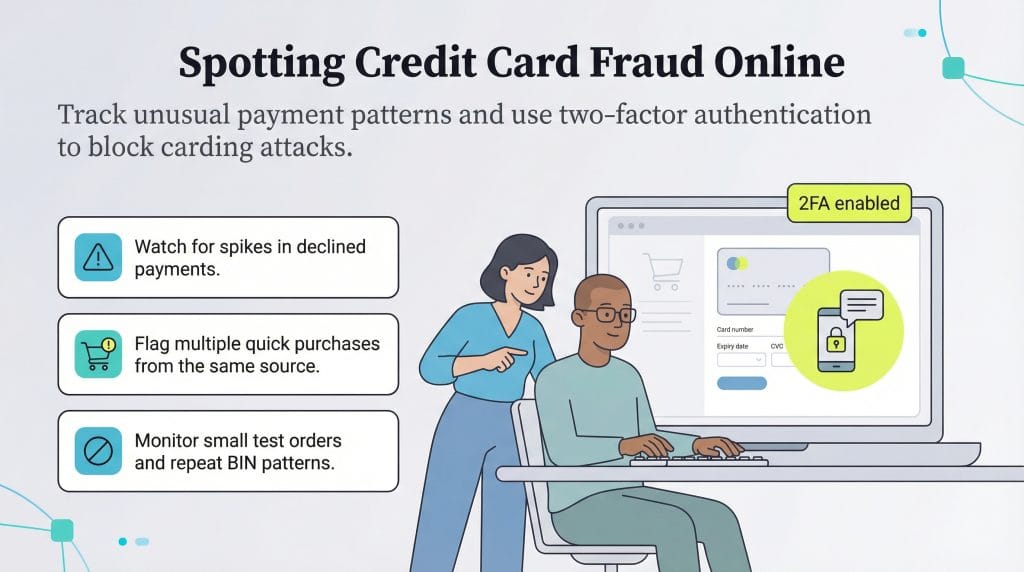

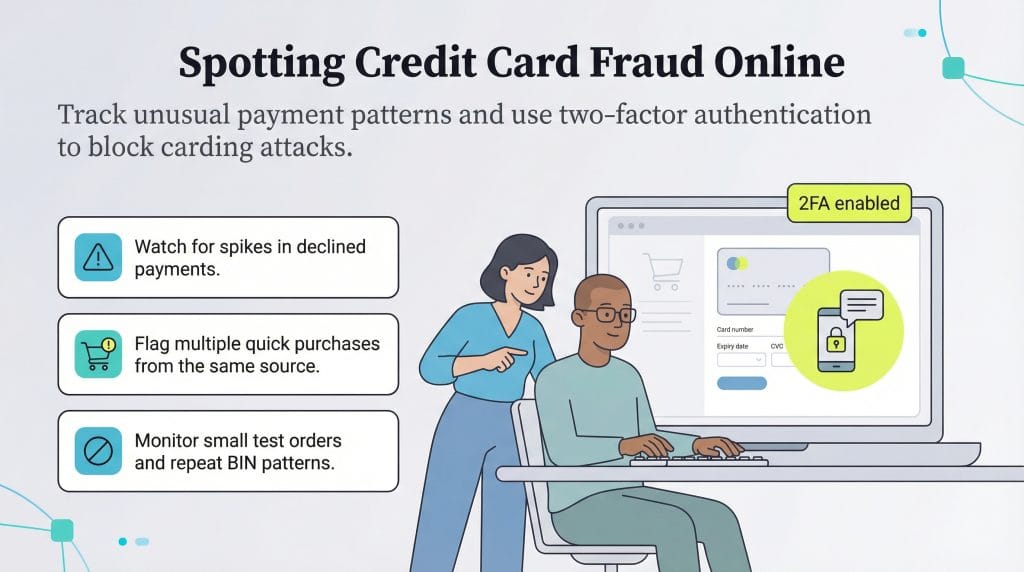


AI on the Scammers' Side: Automating Chaos

Carders aren't just thieves; they're real hackers, and AI makes their attacks smarter and more widespread. Generative AI (like ChatGPT models) enables the creation of convincing phishing emails, fake websites, and even synthetic identities: combinations of real data that form "virtual" personas. For example, scammers use AI to analyze public data and create personalized scam messages that trick victims into revealing their card details.

One key tactic is card testing, or "carding attacks": AI-powered bots generate thousands of potential card numbers and test them on websites, mimicking human actions to avoid detection. This results in billions in losses: brute-force attacks alone account for over $1 billion annually. AI also creates deepfakes (fake videos or voices) to deceive victims in schemes like romance scams, and forged documents for opening accounts.

Fraud-as-a-service platforms are emerging on the darknet, where AI agents automate the entire process: from data theft to laundering through cryptocurrency. Synthetic fraud — when AI blends real data to create new identities — has become a true epidemic, with losses predicted to reach $40 billion in the US by 2027.

AI as a Shield: Fraud Detection and Prevention

But it's not all doom and gloom! Banks and payment systems are using AI to counter attacks. Machines analyze billions of transactions in real time, identifying anomalies based on behavior patterns, locations, and amounts. For example, if your card is typically used in Moscow, but suddenly a purchase appears in New York, AI immediately flags it.

Machine learning (ML) and deep learning (DL) models learn from historical data and adapt to new threats. Systems like Visa Protect use AI to block attacks, reducing false positives and increasing accuracy to 95%+. AI also monitors site traffic, distinguishing bots from humans by click speed or interaction patterns.
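
The Moscow and New York example can be made concrete with a small rule-based sketch. This is only an illustration, not how any bank or payment network actually scores transactions: the `Transaction` type, the `flag_transaction` function, the z-score threshold of 3.0, and the sample amounts are all assumptions made up for this example. Production systems use trained models over far more signals.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Transaction:
    amount: float
    city: str


def flag_transaction(history, new_tx, z_threshold=3.0):
    """Return a list of reasons the new transaction looks unusual (empty if none)."""
    reasons = []

    # Location rule: the city has never appeared in this card's history.
    known_cities = {tx.city for tx in history}
    if new_tx.city not in known_cities:
        reasons.append("unfamiliar location")

    # Amount rule: z-score of the new amount against past amounts.
    amounts = [tx.amount for tx in history]
    if len(amounts) >= 2:
        mu, sigma = mean(amounts), stdev(amounts)
        if sigma > 0 and abs(new_tx.amount - mu) / sigma > z_threshold:
            reasons.append("unusual amount")

    return reasons


# Hypothetical card history: small purchases, all in Moscow.
history = [Transaction(a, "Moscow") for a in (20.0, 35.0, 25.0, 30.0, 40.0)]
print(flag_transaction(history, Transaction(32.0, "Moscow")))     # []
print(flag_transaction(history, Transaction(900.0, "New York")))  # ['unfamiliar location', 'unusual amount']
```

A fixed rule like this is easy to read but produces false positives (a cardholder who travels, for instance), which is one reason real systems learn from historical data instead.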

In 2023, the FTC recorded more than $10 billion in reported fraud losses, but AI is helping save millions: Mastercard reports reduced losses thanks to real-time analysis. Frameworks like GAI-FDF (Generative AI Fraud Detection Framework) generate synthetic fraud scenarios to train models.

Trends and Statistics: Where is the Battle Heading?

In 2026, AI strengthens both sides. On the fraudsters' side, AI agents for automation and synthetic identities are on the rise (98% of experts are concerned). On the defenders' side, the anti-fraud AI market is growing to $14+ billion.

Table of key trends:

| Aspect | Fraudsters use AI | Defenders use AI | Losses/Forecast |
|---|---|---|---|
| Phishing and scams | Text generation, deepfakes | Real behavior analysis | $10 billion in 2023 (FTC) |
| Card testing | Brute-force bots | Anomaly analysis | $1 billion annually |
| Synthetic IDs | Creating fake profiles | Adaptive learning | Up to $40 billion by 2027 |
| Automation | Fraud-as-a-service | Tokenization, 2FA+ | Attacks increased by 67% |
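
For the "Card testing" row, one simple form of anomaly analysis on the defender side is a velocity check: flag a source that piles up declined authorizations in a short time. The sketch below is an assumption-laden illustration, not any vendor's method. The `VelocityMonitor` class, the limit of 5 declines, the 60-second window, and the sample IP address (from a documentation-reserved range) are all invented for this example.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Flags a source that accumulates too many declined authorizations in a short window."""

    def __init__(self, max_declines=5, window_seconds=60.0):
        self.max_declines = max_declines
        self.window_seconds = window_seconds
        self._declines = defaultdict(deque)  # source id -> timestamps of declines

    def record(self, source, timestamp, approved):
        """Record one attempt; return True if the source should be flagged."""
        window = self._declines[source]
        if not approved:
            window.append(timestamp)
        # Drop declines that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_declines


# Hypothetical burst: eight declined attempts, one per second, from one source.
monitor = VelocityMonitor(max_declines=5, window_seconds=60.0)
flags = [monitor.record("203.0.113.7", t, approved=False) for t in range(8)]
print(flags)  # [False, False, False, False, False, True, True, True]
```

A merchant would typically respond to a flag with a challenge or a temporary block rather than a silent drop, and would key on more than one identifier (device, account, card BIN) since a single IP is easy to rotate.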

Research shows that AI reduces false positives, but requires ethical frameworks to avoid discriminating against users.
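
To make "false positives" and "discriminating against users" measurable, one option is to compare the false positive rate (the share of legitimate transactions that were flagged) across user groups. The sketch below assumes labeled outcomes are available. The function name, the record layout, the group labels "A" and "B", and every number in the sample are made up for illustration and are not data from any study.

```python
from collections import Counter


def false_positive_rate_by_group(records):
    """records: iterable of (group, is_fraud, was_flagged) tuples.

    Returns {group: share of legitimate transactions that were flagged}.
    """
    legit = Counter()
    flagged_legit = Counter()
    for group, is_fraud, was_flagged in records:
        if not is_fraud:
            legit[group] += 1
            if was_flagged:
                flagged_legit[group] += 1
    return {g: flagged_legit[g] / legit[g] for g in legit}


# Made-up sample: (group, is_fraud, was_flagged)
records = (
    [("A", False, False)] * 95 + [("A", False, True)] * 5
    + [("B", False, False)] * 80 + [("B", False, True)] * 20
    + [("A", True, True)] * 3 + [("B", True, True)] * 3
)
print(false_positive_rate_by_group(records))  # {'A': 0.05, 'B': 0.2}
```

In this invented sample, legitimate users in group B are flagged four times as often as those in group A, which is the kind of gap an ethical review would want explained or corrected.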