Carding 4 Carders

Professional

- Messages

- 2,724

- Reaction score

- 1,598

- Points

- 113

Neural networks remember objects in ways that humans can't.

Human sensory systems have the unique ability to recognize objects or words regardless of how they are presented. This applies both to the rotation of the object and to the character of the voice that pronounces the word. Modern Deep Neural Networks (DNN) are capable of mimicking human abilities by correctly identifying a dog's image or word, regardless of coat color or voice pitch. However, a new MIT study has shown that DNN models often respond in the same way to stimuli that are not at all similar to the original object.

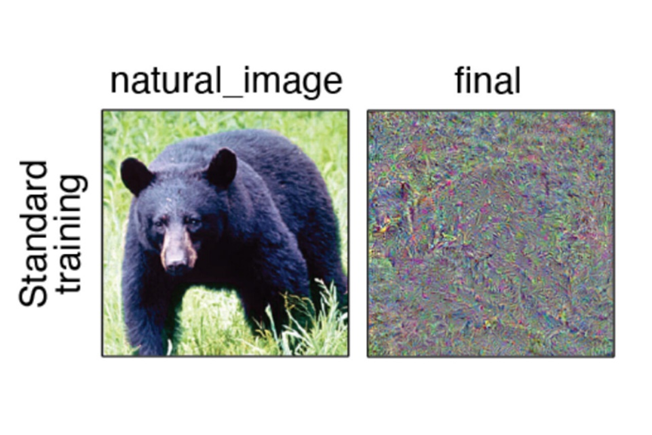

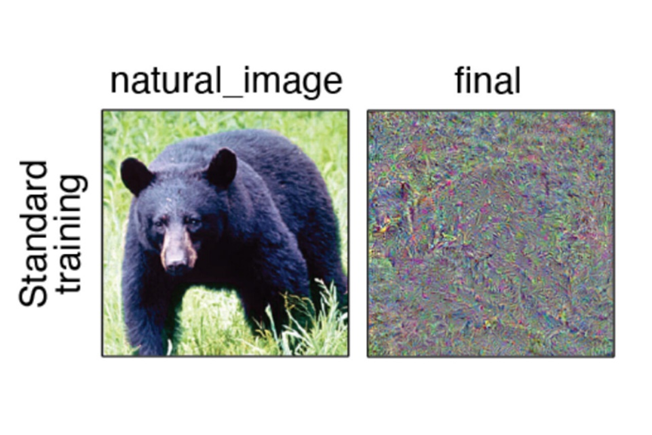

The researchers used DNN to create images or words that responded similarly to a specific input, such as a photo of a bear. Most networks created images or sounds that were unrecognizable to humans. This suggests that models form their own "invariants" - specific responses to various stimuli.

On the right is an example of what the model identified as a "bear"

The discovery offers a new way to assess how models mimic the organization of human perception. In recent years, many DNNs have been developed that can analyze millions of inputs and determine common characteristics for classifying target sounds or objects with human-like accuracy. However, the researchers found that most of the images and sounds generated by DNN were unrecognizable to humans. The images were a chaos of random pixels, and the sounds were indecipherable noise.

It was also found that the effect was the same in different models of vision and hearing, but each of these models created its own unique invariants. In other words, DNNs create their own unique "invariants" that are different from those created in humans. It is noteworthy that during the tests, the invariants of one model were not clear to the other.

Human sensory systems have the unique ability to recognize objects or words regardless of how they are presented. This applies both to the rotation of the object and to the character of the voice that pronounces the word. Modern Deep Neural Networks (DNN) are capable of mimicking human abilities by correctly identifying a dog's image or word, regardless of coat color or voice pitch. However, a new MIT study has shown that DNN models often respond in the same way to stimuli that are not at all similar to the original object.

The researchers used DNN to create images or words that responded similarly to a specific input, such as a photo of a bear. Most networks created images or sounds that were unrecognizable to humans. This suggests that models form their own "invariants" - specific responses to various stimuli.

On the right is an example of what the model identified as a "bear"

The discovery offers a new way to assess how models mimic the organization of human perception. In recent years, many DNNs have been developed that can analyze millions of inputs and determine common characteristics for classifying target sounds or objects with human-like accuracy. However, the researchers found that most of the images and sounds generated by DNN were unrecognizable to humans. The images were a chaos of random pixels, and the sounds were indecipherable noise.

It was also found that the effect was the same in different models of vision and hearing, but each of these models created its own unique invariants. In other words, DNNs create their own unique "invariants" that are different from those created in humans. It is noteworthy that during the tests, the invariants of one model were not clear to the other.