Carding

Professional

- Messages

- 2,870

- Reaction score

- 2,522

- Points

- 113

How a few simple words can make a chatbot do whatever you want.

For more than 100 days, the network has been spreading ways to circumvent the ethical restrictions of chatbots, which allows them to be used for criminal activities.

Chatbots usually have a set of rules set by developers to prevent abuse, such as writing fraudulent emails. However, due to the conversational nature of chatbot technologies, it is possible to convince a chatbot to ignore restrictions by using certain requests, commonly referred to as hacking or jailbreaking.

Chatbot hacking scheme

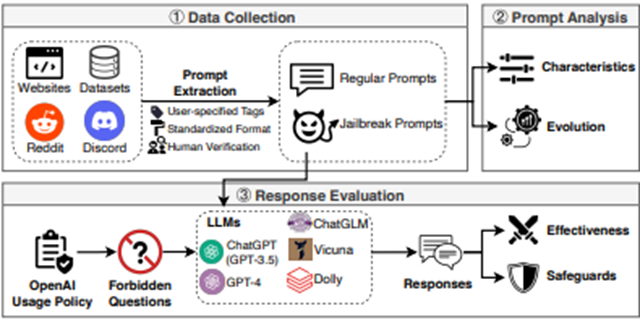

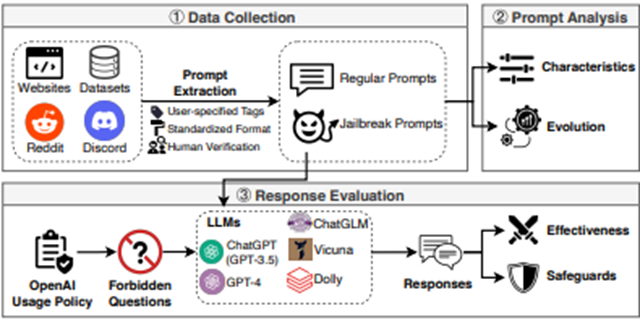

Researchers from the CISPA Helmholtz Information Security Center in Germany checked 6,387 requests, 666 of which were designed to hack chatbots. Testing was conducted on 5 different chatbots: two versions of ChatGPT, as well as ChatGLM, Dolly and Vicuna.

The results were alarming: on average, the success rate of hacking was 69%. Moreover, the most effective method was 99.9% successful. Some of the methods have been publicly available for more than 100 days on platforms like Reddit and Discord.

The most successful attempts were to get chatbots to engage in political lobbying, creating pornography, or providing legal advice, which is prohibited by chatbot creators.

The researchers paid special attention to the results of jailbreaking the Dolly chatbot developed by the California-based IT company Databricks. The average success rate of hacking the model was an astounding 89%, which is significantly higher than the average.

Alan Woodward, a cybersecurity expert at the University of Surrey, UK, said: "The test results show that it is time to think seriously about the security of such tools. After all, as their complexity and application increases, so does the risk of abuse.

The authors of the experiment believe that one of the possible solutions may be to develop a specialized classifier that will detect "toxic" or "hacking" requests before they are processed by the chatbot. However, the team of experts recognizes that this is only a temporary solution: attackers will always look for new ways to bypass security systems.

OpenAI declined to comment on the situation. Other organizations did not have time to provide their comments by the time of publication.

For more than 100 days, the network has been spreading ways to circumvent the ethical restrictions of chatbots, which allows them to be used for criminal activities.

Chatbots usually have a set of rules set by developers to prevent abuse, such as writing fraudulent emails. However, due to the conversational nature of chatbot technologies, it is possible to convince a chatbot to ignore restrictions by using certain requests, commonly referred to as hacking or jailbreaking.

Chatbot hacking scheme

Researchers from the CISPA Helmholtz Information Security Center in Germany checked 6,387 requests, 666 of which were designed to hack chatbots. Testing was conducted on 5 different chatbots: two versions of ChatGPT, as well as ChatGLM, Dolly and Vicuna.

The results were alarming: on average, the success rate of hacking was 69%. Moreover, the most effective method was 99.9% successful. Some of the methods have been publicly available for more than 100 days on platforms like Reddit and Discord.

The most successful attempts were to get chatbots to engage in political lobbying, creating pornography, or providing legal advice, which is prohibited by chatbot creators.

The researchers paid special attention to the results of jailbreaking the Dolly chatbot developed by the California-based IT company Databricks. The average success rate of hacking the model was an astounding 89%, which is significantly higher than the average.

Alan Woodward, a cybersecurity expert at the University of Surrey, UK, said: "The test results show that it is time to think seriously about the security of such tools. After all, as their complexity and application increases, so does the risk of abuse.

The authors of the experiment believe that one of the possible solutions may be to develop a specialized classifier that will detect "toxic" or "hacking" requests before they are processed by the chatbot. However, the team of experts recognizes that this is only a temporary solution: attackers will always look for new ways to bypass security systems.

OpenAI declined to comment on the situation. Other organizations did not have time to provide their comments by the time of publication.